Discover Summer Research Opportunities at the University of Toronto

The Statistical Sciences Research Program (UTSSRP) invites Canada’s top statistics, data sciences, and mathematics undergraduates to engage in a transformative research experience. This program provides a unique blend of theoretical study and practical research, supervised by some of the foremost academics in the field.

2025 UTSSRP Cohort

| Collaborative Research Experience | Intensive Short Courses | Research Seminars |

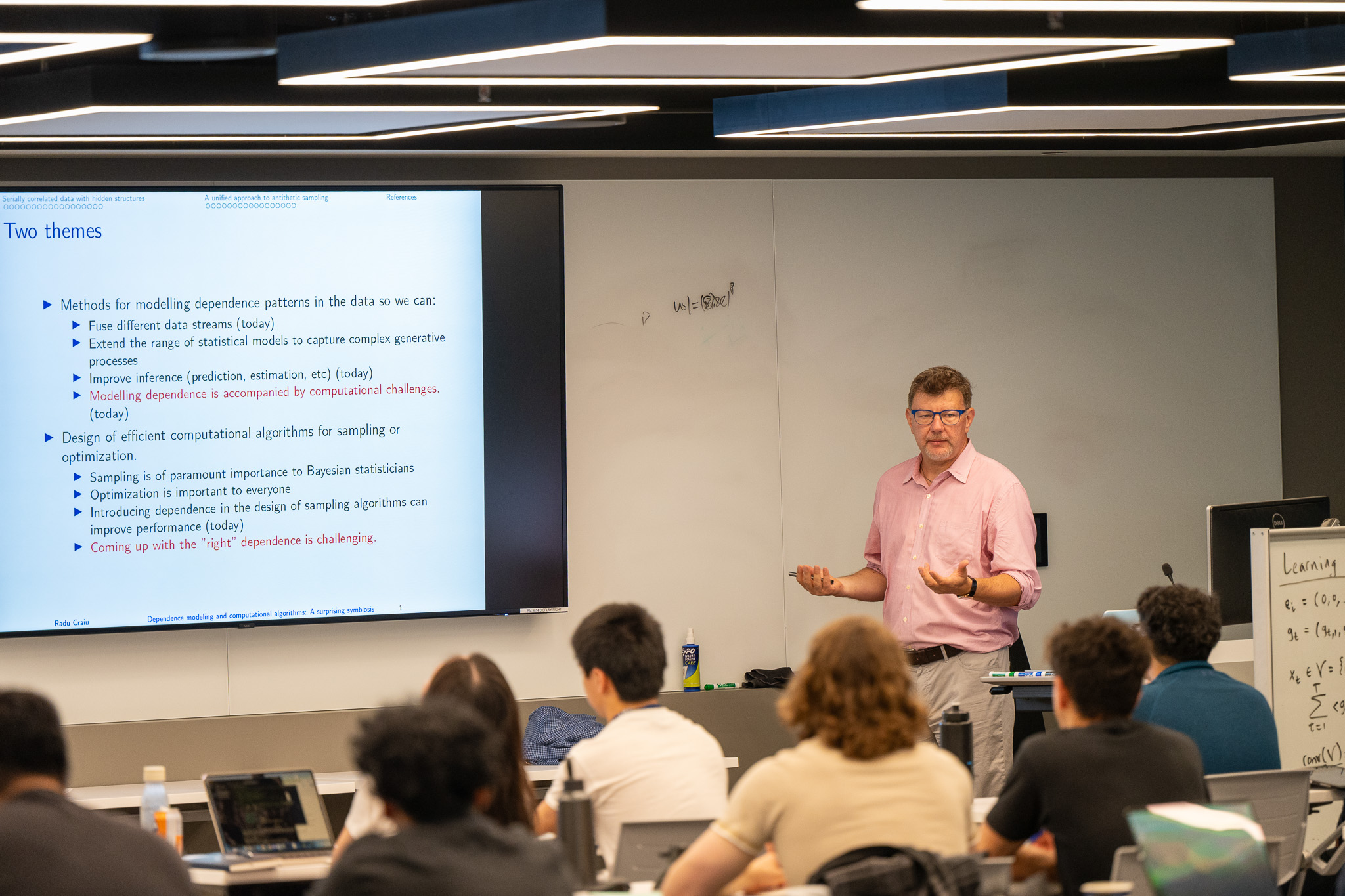

| Students will collaborate in teams to tackle either theoretical or applied research projects under our esteemed faculty's guidance and doctoral candidates' mentorship. The program culminates with participants presenting their findings through a lightning talk and a poster session during the final day’s celebratory reception. | Our program includes a series of engaging short courses that delve into both theoretical and applied aspects of statistics, delivered by internationally acclaimed faculty members. These courses are designed to provide intensive, graduate-level instruction in key statistical methodologies and frameworks. | Our summer research program features a series of captivating seminars where faculty members will present their cutting-edge research, providing students with a window into the vibrant scholarly environment at the University of Toronto. These seminars not only highlight current scientific inquiries but also explore the varied career paths that our PhD graduates follow. |

2026 Program Guide

Dates: Monday June 8 - Friday June 19, 2026

Location: Department of Statistical Sciences, 9th floor, 700 University

- Opening remarks will be in room 9014

- All courses, tutorials, and seminars will be in rooms 9014, 9016, 9173 and 9199

- Lunches will be provided in room 9016

- Research time will be in different rooms for each group (refer to table below the schedule)

Week 1: June 8-12, 2026

| | Sun June 7 | Mon June 8 | Tues June 9 | Wed June 10 | Thurs June 11 | Fri June 12 | Sat June 13 |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 9:30-11:30 | Travel and Check in to Accommodations | Opening Remarks; Applied Course (Introduction to Statistical Methods in Ecology, Gracia) | Theory Course (Robust Statistical Methods, Keith) | Applied Course (Introduction to Quasi- and Pseudo-Random Number Generation, Gracia) | Theory Course (Robust Statistical Methods, Keith) | Applied Course (High-dimensional data, Elena) | |
| 11:30-1:30 | | Lunch | Lunch | Lunch | Lunch | Lunch | |
| 1:30-2:30 | | Campus Tour | Seminar: Jun Young Park | Seminar: Thibault Randrianarisoa | Seminar: Nancy Reid | Seminar: Students | |
| 2:30-5:30 | | Meeting with Research Supervisor; Research | Research | Research | Research | Research | |

Week 2: June 15-19, 2026

| | Sun June 14 | Mon June 15 | Tues June 16 | Wed June 17 | Thurs June 18 | Fri June 19 | Sat June 20 |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 9:30-11:30 | | Theory Course (Mathematics of Generative Models, Ricardo) | Applied Course (Bayesian Inference, Josh) | Theory Course (Mathematics of Generative Models, Ricardo) | Applied Course (Bayesian Inference, Josh) | Theory Course (Stochastic differential equations, Sebastian) | |
| 11:30-1:30 | | Lunch | Lunch | Lunch | Lunch | Lunch | |
| 1:30-2:30 | | Seminar: Jeff Rosenthal | Seminar: Meredith Franklin | Career panel | Seminar: Silvana Pesenti | Prep for Reception | |
| 2:30-5:30 | | Research | Research | Research | Research | Closing Reception & Posters | |

Teaching:

- Theory courses: Ricardo Baptista, Sebastian Jaimungal, Keith Knight

- Applied courses: Gracia Dong, Josh Speagle, Elena Tuzhilina

Projects:

- Theory: Ricardo Baptista, Sebastian Jaimungal, Stanislav Volgushev

- Applied: Meredith Franklin, Josh Speagle, Elena Tuzhilina

Talks:

Theory: Robust Statistical Methods, Keith Knight

This material introduces the fundamental ideas of robust statistical methods and contrasts them with classical statistical approaches. The discussion begins with key concepts such as Fisher consistency, qualitative robustness, the influence curve, and the breakdown point, which provide a framework for evaluating the robustness of statistical procedures. The focus then moves to location estimation, including the contaminated normal model and robust estimators such as M-estimators and L-estimators, with emphasis on efficiency and bias robustness. Regression estimation is considered next, including M-estimation and high-breakdown methods such as least median of squares and S-estimation. Finally, the material covers multivariate robust methods, including multivariate medians, data depth, and robust covariance estimation, which are important tools for analyzing multivariate data in the presence of outliers or model deviations.
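To make the contrast between classical and robust location estimators concrete, here is a minimal sketch in Python. It is an illustration only, not course material: the function names, the one-sided contamination (10% of points drawn from N(20, 1) rather than a wider-variance normal), the tuning constant c = 1.345, and the MAD-based fixed scale are all assumptions chosen for the example.

```python
import random
import statistics


def contaminated_sample(n, eps=0.1, outlier_mean=20.0, seed=0):
    """Draw n points: N(0, 1) with probability 1 - eps, else N(outlier_mean, 1)."""
    rng = random.Random(seed)
    return [
        rng.gauss(outlier_mean, 1.0) if rng.random() < eps else rng.gauss(0.0, 1.0)
        for _ in range(n)
    ]


def huber_location(xs, c=1.345, tol=1e-8, max_iter=100):
    """Huber M-estimate of location by iteratively reweighted averaging.

    The scale is held fixed at the normalized median absolute deviation (MAD).
    """
    mu = statistics.median(xs)
    scale = 1.4826 * statistics.median([abs(x - mu) for x in xs])
    if scale == 0:
        return mu
    for _ in range(max_iter):
        # Full weight for small residuals, down-weighting c*scale/|r| for large ones.
        weights = [
            1.0 if abs(x - mu) <= c * scale else c * scale / abs(x - mu)
            for x in xs
        ]
        new_mu = sum(w * x for w, x in zip(weights, xs)) / sum(weights)
        if abs(new_mu - mu) < tol:
            return new_mu
        mu = new_mu
    return mu


xs = contaminated_sample(n=1000)
print("sample mean:", statistics.fmean(xs))
print("median:     ", statistics.median(xs))
print("Huber:      ", huber_location(xs))
```

Under these assumptions the sample mean is pulled toward the outliers, while the median and the Huber estimate stay much closer to the centre of the uncontaminated N(0, 1) component.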

Theory: Mathematics of Generative Models, Ricardo Baptista

Characterizing probability distributions is fundamental to representing uncertainty and lies at the core of machine learning and decision-making. Generative modeling provides a flexible framework for approximating complex, high-dimensional probability distributions from data by representing them as transformations of simple reference distributions that are easy to sample from, such as a standard Gaussian. In recent years, generative modeling techniques have rapidly advanced, enabling applications ranging from photo-realistic image synthesis and natural language processing to scientific domains such as drug discovery and numerical weather prediction. This course presents the mathematical foundations of generative models, emphasizing their connections to optimal transport theory. We will introduce three widely used classes of models in machine learning: normalizing flows, generative adversarial networks, and score-based diffusion models. Students will have an opportunity to implement these models and to assess their respective strengths and limitations using numerical experiments. Lastly, we will provide a brief overview of active research directions, with the goal of motivating students to engage with and contribute to this rapidly evolving field.

Theory: Stochastic differential equations, Sebastian Jaimungal

This crash course introduces stochastic differential equations (SDEs) as a mathematical framework for modeling systems influenced by randomness. Beginning with Brownian motion and its fundamental properties, the course develops stochastic integrals and Itô calculus, leading to the formulation and analysis of SDEs. Emphasis is placed on intuition, modeling, and interpretation alongside key theoretical results. Applications and coding examples are woven throughout the course, illustrating how SDEs arise in diverse settings including climate models, asset price dynamics and risk modeling in finance, and data-generating models in machine learning.
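As a rough illustration of the kind of coding example such a course might include, the sketch below simulates an SDE with the Euler-Maruyama scheme. The choice of scheme, the geometric Brownian motion example, and all names and parameter values are assumptions made for this illustration, not taken from the course.

```python
import math
import random


def euler_maruyama(drift, diffusion, x0, t_end, n_steps, rng):
    """Simulate one path of dX = drift(X) dt + diffusion(X) dW on [0, t_end]."""
    dt = t_end / n_steps
    x = x0
    path = [x0]
    for _ in range(n_steps):
        dw = rng.gauss(0.0, math.sqrt(dt))  # Brownian increment ~ N(0, dt)
        x = x + drift(x) * dt + diffusion(x) * dw
        path.append(x)
    return path


def gbm_terminal_mean(mu=0.05, sigma=0.2, s0=100.0, t_end=1.0,
                      n_steps=100, n_paths=2000, seed=0):
    """Monte Carlo estimate of E[S_T] for dS = mu*S dt + sigma*S dW."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_paths):
        path = euler_maruyama(lambda s: mu * s, lambda s: sigma * s,
                              s0, t_end, n_steps, rng)
        total += path[-1]
    return total / n_paths


print("simulated E[S_T]:", gbm_terminal_mean())
print("exact E[S_T]:    ", 100.0 * math.exp(0.05))
```

For geometric Brownian motion the exact mean is s0 * exp(mu * T), so the simulated average can be checked against it; the remaining gap reflects Monte Carlo noise and the time-discretization error of the scheme.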

Applied: Bayesian Statistics, Josh Speagle

This course provides a practical introduction to Bayesian statistical inference, emphasizing hands-on learning and intuition. The course will cover fundamentals such as priors, likelihoods, and posteriors, as well as computational methods for estimating posterior distributions and posterior predictive checking. Students will also learn about Bayesian linear regression and hierarchical modelling, which enables inference at multiple levels of a structured problem simultaneously. Throughout the course, concepts are illustrated with scientific applications, including examples from astronomy research. No prior experience with Bayesian statistics is assumed.

Applied: Introduction to Quasi- and Pseudo-Random Number Generation, Gracia Dong

This crash course provides an introduction to Monte Carlo and Quasi-Monte Carlo methods. We will introduce pseudo- and quasi-random number generation, review the inversion method for random variate generation, and cover variance reduction techniques. We will finish the course with financial applications, such as option pricing.

No prior experience with simulation methods is required. Basic R or Python coding experience is assumed.
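The sketch below illustrates two of the ideas named above: the inversion method, and the difference between pseudo-random and quasi-random inputs. It is a minimal example under stated assumptions: the base-2 van der Corput sequence stands in for a quasi-random generator, the Exp(1) target is chosen because its inverse CDF is explicit, and all function names are hypothetical.

```python
import math
import random


def van_der_corput(i, base=2):
    """Return the i-th point (i >= 1) of the van der Corput sequence in the given base."""
    x, denom = 0.0, 1.0
    while i > 0:
        i, digit = divmod(i, base)
        denom *= base
        x += digit / denom
    return x


def exponential_inverse_cdf(u, rate=1.0):
    """Inversion method: if U ~ Uniform(0, 1), then -ln(1 - U) / rate ~ Exp(rate)."""
    return -math.log(1.0 - u) / rate


def estimate_mean(uniforms):
    """Average the transformed points to estimate E[X] for X ~ Exp(1)."""
    xs = [exponential_inverse_cdf(u) for u in uniforms]
    return sum(xs) / len(xs)


n = 1024
rng = random.Random(0)
mc_estimate = estimate_mean([rng.random() for _ in range(n)])
qmc_estimate = estimate_mean([van_der_corput(i) for i in range(1, n + 1)])

print("pseudo-random estimate:", mc_estimate)
print("quasi-random estimate: ", qmc_estimate)
print("exact value:            1.0")
```

Because the quasi-random points fill the unit interval more evenly than independent draws, the quasi-random estimate is typically closer to the exact value for smooth one-dimensional integrands like this one, although that is not guaranteed for any single pseudo-random seed.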

Applied: Introduction to Statistical Methods in Ecology, Gracia Dong

This course introduces methods commonly used in statistical ecology. Students will be introduced to NIMBLE (Numerical Inference for statistical Models using Bayesian and Likelihood Estimation), a system for writing hierarchical statistical models (R package “nimble”). Students will work through an example using simulated capture-recapture data, including adding covariates for heterogeneity in capture and survival probabilities.

This course assumes the student has prior R coding experience.

Applied: Curse of Dimensionality, Elena Tuzhilina

We will discuss the curse of dimensionality, a fundamental challenge that arises when working with high-dimensional data. As the number of variables increases, data points become sparse, distances between observations become less informative, and many statistical and machine learning methods begin to perform poorly. Through visual examples and simple calculations, we will illustrate phenomena such as distance concentration, sparsity of data, and the rapid growth of volume in high dimensions. The goal is to build intuition about high-dimensional data and to understand why many modern statistical methods are specifically designed for high-dimensional settings.
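Distance concentration can be seen in a few lines of Python. The sketch below is an illustration under assumed settings (uniform points in the unit cube, Euclidean distance, 200 points, a hypothetical `relative_contrast` helper); it measures how different the farthest and nearest neighbours of a query point are, relative to the nearest distance.

```python
import math
import random


def relative_contrast(dim, n_points=200, seed=0):
    """(max - min) / min over distances from a query point to uniform points in [0, 1]^dim."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(dim)]
        dists.append(math.dist(query, point))
    return (max(dists) - min(dists)) / min(dists)


for dim in (2, 10, 100, 1000):
    print(f"dim={dim:5d}  relative contrast={relative_contrast(dim):.3f}")
```

As the dimension grows, the contrast shrinks toward zero: the nearest and farthest points end up at nearly the same distance, which is one reason distance-based methods lose discriminating power in high dimensions.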

Sebastian Jaimungal, Model Combination, Projections, and Clustering of Probability Measures

This project is built around the notion of model combination, projections, and clustering of probability measures. In the usual k-means setup, one is interested in understanding how unlabeled data are clustered. Data in this setting are usually viewed as being observations sampled from R^n. This project instead investigates a setting where the data are themselves probability measures - e.g., provided by a number of experts or by estimation of measures from different sets of data - and we study how to combine probability measures, how to project the probability measures onto a space of constraints (that represent, e.g., beliefs that the agent has), and how to cluster those measures. While the focus will be on theory, the project has implications for climate science where there is a reliance on ensembles of global climate models, mathematical finance where agents' preferences can be embedded into their models, and distributional clustering in machine learning. We may have time to investigate these applications and use simulation and deep learning approaches to investigate consequences of the theory.

Joshua Speagle, Establishing Trustworthy Scientific Inference

Scientists mean many things when they try to quantify "uncertainty" in a measurement. This project will explore ways that we conceptualize uncertainty and how this can impact downstream scientific inference, with a focus on how to translate between Bayesian and Frequentist goals and how to work with complex, "black box" estimators. Ideas and applications will be drawn from https://arxiv.org/abs/2508.02602, focusing on improving how we estimate the physical properties and chemical compositions of stars from millions of stellar spectra collected by the DESI, SDSS, and LAMOST surveys.

Elena Tuzhilina, Batch Effect Correction in Single-Cell Data

Single-cell data are often collected across different experiments or time points. This can introduce technical differences, known as batch effects, that are not related to true biological variation. These batch effects can make it difficult to correctly analyze and interpret the data. In this project, we will focus on the canonical correlation analysis (CCA) method implemented in Seurat, which is widely used for batch correction and data integration in single-cell studies. We will study how well Seurat CCA removes technical variation while preserving meaningful biological patterns. We will also compare its performance to a few other common methods and explore possible ways to improve the results. The goal is to better understand the strengths and limitations of Seurat CCA and identify potential improvements for batch correction in single-cell datasets.

Ricardo Baptista, Solving Inverse Problems with Contrastive Learning

Description: Inverse problems arise throughout science and engineering, from medical imaging to geophysics, when recovering signals or parameters from indirect and noisy observations. Classical approaches typically rely on explicit regularization and hand-crafted priors (e.g., sparsity or smoothness) to reconstruct signals, but these assumptions often fail to capture the complex structure of modern high-dimensional data. This project explores contrastive learning, a data-driven framework behind models such as CLIP, which has been immensely successful in data science for solving inverse problems that map text to images and vice versa. We will develop contrastive learning methods for representative scientific inverse problems and explore strategies to improve reconstruction quality and uncertainty quantification in comparison to classical and competing methods, following some ideas proposed in: https://arxiv.org/abs/2505.24134.

Meredith Franklin, Bootstrap Ensemble and Meta-Learning for Air Pollution Prediction in Bangladesh Using Satellite and Meteorological Data

This project will develop a predictive modeling framework for estimating particulate matter in Bangladesh using remote sensing and climate data. Students will each build a distinct machine learning model using data that includes aerosol-related satellite observations from two different satellite instruments, land-use, meteorology, and spatiotemporal trend features. To strengthen predictive performance and quantify uncertainty, the project will incorporate bootstrap ensemble learning, in which the data are repeatedly resampled and the individual models are refit across bootstrap samples to generate a distribution of predictions rather than a single estimate. These resampled predictions will then be combined at the ensemble stage using both bootstrap-estimated weights, which assign greater influence to models with stronger predictive performance, and a stacked meta-learning approach that learns how to optimally combine the individually developed models. This framework will allow students to compare how different modeling approaches capture nonlinear relationships, spatial variability, and temporal patterns in air pollution, while also assessing the stability of their predictions across resamples. The final result will be an ensemble PM2.5 prediction that is designed to improve overall accuracy and robustness, together with a measure of predictive uncertainty derived from variation across bootstrap fits, model types, and ensemble combinations.

Stanislav Volgushev, Benign overfitting in binary classification

Description: Classical statistical theory teaches us that there is a trade-off between approximation bias and the variance of estimators, and that having too many model parameters leads to poor estimators. This leads to the prediction that estimators which perfectly interpolate the data should suffer from poor performance. This understanding has been challenged by recent numerical and theoretical results which show that even estimators that perfectly interpolate the data can have good statistical properties. In this project, we will explore the theoretical underpinnings of benign overfitting in the setting of binary linear classification. We will also validate the theoretical predictions through simulations.

Jun Young Park, Statistical perspectives on the replication crisis in scientific research

Since 2010, there has been serious concern in the scientific community that many research findings, often supported by seemingly rigorous quantitative evidence, are not replicable. This issue spans various fields, including but not limited to medicine, psychology, health, and the social and natural sciences. Using the lens of statistics, we will evaluate why this replication crisis has occurred and examine past research in psychology and neuroscience in which study data or statistical analyses were mishandled, leading to misleading conclusions.

Thibault Randrianarisoa, Beyond Classical Kernel Estimators: Richer Approximation Properties in Bayesian Nonparametrics

Bayesian methods are widely adopted in modern statistics for practical reasons, like their natural framework for uncertainty quantification. However, they also offer some theoretical advantages. This talk will first review the classical mathematical tools used to study the convergence of posterior distributions in nonparametric inference. Building on this, we will demonstrate a distinct theoretical advantage of Bayesian kernel methods over their frequentist counterparts. Specifically, in density estimation using Gaussian mixture models, the posterior adaptively contracts at the optimal rate for true densities of any smoothness level. Remarkably, classical frequentist Gaussian kernel density estimators fail to achieve these optimal rates for highly smooth functions, suggesting an advantage of Bayesian methods.

Nancy Reid, Statistical Theory

Through a series of examples I’ll show how statistical theory impacts statistical practice, and describe some areas where theory is currently making rapid advances.

Jeff Rosenthal, The Magic of Monte Carlo

Monte Carlo algorithms have completely revolutionised statistical computation, allowing previously intractable models to be easily solved. In particular, Markov chain Monte Carlo (MCMC) algorithms have allowed for the use of Bayesian inference in a multitude of settings. One reason for their success is a strong theoretical foundation, which allows us to validate the basic algorithms, provide numerous extensions and generalisations of the algorithms, clarify different algorithm options and tunings, and evaluate the results. This talk will present simple examples to illustrate the workings of MCMC. It will emphasise the impact and importance of various theoretical MCMC issues including ergodicity, qualitative and quantitative convergence rates, optimal scalings, and adaptive MCMC.
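For readers who have not seen MCMC before, the following is a minimal sketch of a random-walk Metropolis sampler. It is not drawn from the talk: the standard normal target, the function names, and the proposal step size of 2.4 (a common rule of thumb for a one-dimensional Gaussian target) are assumptions chosen for illustration.

```python
import math
import random
import statistics


def random_walk_metropolis(log_density, x0, n_samples, step_size, seed=0):
    """Sample from a 1-D target, known up to a constant, with Gaussian random-walk proposals."""
    rng = random.Random(seed)
    x, log_p = x0, log_density(x0)
    samples, accepted = [], 0
    for _ in range(n_samples):
        proposal = x + rng.gauss(0.0, step_size)
        log_p_new = log_density(proposal)
        # Accept with probability min(1, target(proposal) / target(x)).
        if rng.random() < math.exp(min(0.0, log_p_new - log_p)):
            x, log_p = proposal, log_p_new
            accepted += 1
        samples.append(x)  # a rejected proposal repeats the current state
    return samples, accepted / n_samples


def standard_normal_log_density(x):
    """Log density of N(0, 1) up to an additive constant."""
    return -0.5 * x * x


samples, acceptance_rate = random_walk_metropolis(
    standard_normal_log_density, x0=0.0, n_samples=20000, step_size=2.4
)
print("sample mean:    ", statistics.fmean(samples))
print("sample variance:", statistics.pvariance(samples))
print("acceptance rate:", acceptance_rate)
```

Changing `step_size` shows the tuning trade-off in practice: very small steps are almost always accepted but explore slowly, while very large steps are rarely accepted.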

Meredith Franklin, Spatiotemporal statistical and machine learning methods for environmental modeling

This work develops spatiotemporal statistical and machine learning methodology for environmental modeling, with a focus on estimating complex exposure surfaces from multi-source data and quantifying associated uncertainty. We consider settings where observations are sparse, misaligned across space and time, and measured at heterogeneous supports and scales (e.g., monitors, satellites, climate models), motivating models that jointly address dependence, nonstationarity, and missingness. Our approach combines modern spatiotemporal statistics (Gaussian processes, functional representations, and spatial error structures) with machine learning algorithms for high-dimensional predictors and large datasets. We show several research applications, and highlight how the exposure surfaces we generate connect with downstream health effects and risk assessments.

Silvana Pesenti, Risk assessment under uncertainty and ambiguity

Assessing risk is of widespread importance in finance and insurance as well as in everyday life. Decisions, however, are typically based on data-driven models that exhibit uncertainty arising from, e.g., data misspecification. Moreover, in many situations the choice of risk measure cannot be uniquely elicited, leading to ambiguity in decision making. In this talk, I will introduce the framework of risk assessment and illustrate how to make robust decisions in settings where uncertainty and ambiguity are prevalent.

TBD

- Mufan Li, Assistant Professor at the Department of Statistics and Actuarial Science at the University of Waterloo and a Faculty Affiliate at the Vector Institute.

- Kevin Zhang, Research Machine Learning Scientist, Layer 6 at the AI Research Centre

- Michael Moon, Assistant Professor, Teaching Stream at the Department of Statistical Sciences at the University of Toronto

2026 Supervisors

- Ricardo Baptista: Focuses on probabilistic modeling and computational inference, developing generative and measure-transport methods for complex scientific and engineering problems.

- Meredith Franklin: Develops spatio-temporal statistical and machine-learning tools to estimate environmental exposures and assess their impacts on human health.

- Sebastian Jaimungal: Works on stochastic modeling, machine learning, and optimization, with applications in finance, insurance, and operations under uncertainty.

- Josh Speagle: Combines astronomy, statistics, and machine learning to build scalable models for mapping stellar populations and understanding galaxy formation.

- Elena Tuzhilina: Studies high-dimensional inference, statistical networks, and computational methods for modern data-intensive problems.

- Stanislav Volgushev: Works in theoretical and computational statistics, focusing on quantile regression, empirical processes, copulas, and methods for high-dimensional data.

Program Information

Eligibility

To be eligible for the summer school, applicants must:

- Be Canadian citizens or permanent residents.

- Be 18 years of age or older at the beginning of the program.

- Be full-time university students currently in sophomore or junior year (starting 3rd or 4th year in Fall 2026).

- Have an average grade of A- (3.7) or above in mathematics and statistics courses

- Be available to attend the whole two-week event.

- Submit a UTSSRP Application Form

- A CV or resume

- Transcript(s) (unofficial ones are accepted)

- One reference letter from a professor who can comment on your academic and research abilities and potential. The recommendation letter should be sent by the writer directly to utssrp.statistics@utoronto.ca by February 15, 2026. (applications now closed)

- Select up to 3 preferred supervisors for the research project during the program. Note: We do not guarantee that all students will be matched with their preferred supervisors. However, all projects will be fun and valuable for the students’ development.

To be considered, please email utssrp.statistics@utoronto.ca to receive the upload link to a secure folder.

Frequently Asked Questions (FAQs)

No, we will book your flight for you. We will be in touch after your acceptance and supporting documents have been submitted.

Accommodations will be provided at a University of Toronto residence and we have already reserved rooms for the UTSSRP. Full details and logistics will be shared upon acceptance.

We will provide a light breakfast and lunch on the weekdays of the UTSSRP. You are responsible for your dinners and weekends.

Sunday June 7, 2026.

We are planning a virtual welcome event and will be in touch with those details once everyone in the cohort is confirmed.

The Summer Research Program will include a select group of students from across Canada.